What Is Google BERT?

So, What Is It?

BERT is short for Bidirectional Encoder Representations from Transformers. Google have confirmed that this update will tackle more complicated queries. It’s a deep learning model for natural language processing.

It helps machines understand what the words in a sentence mean by looking at the context around them, rather than at each word in isolation. Think of the word “right”: it can be a direction, or an entitlement, as in the right to access clean water. Google’s search engine is now better able to decipher the context behind a query, which should return better results for the user.
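To make the idea of context concrete, here is a toy sketch in Python. It is not how BERT works internally: BERT learns context from huge amounts of text with a neural network, whereas this example simply counts hand-picked cue words. The `SENSE_CUES` lists and the `guess_sense` function are hypothetical names made up for illustration.

```python
# Toy illustration only: this is NOT BERT, just a cue-word counter.
# SENSE_CUES is a hypothetical, hand-written list of context words
# for two meanings of the word "right".
SENSE_CUES = {
    "direction": {"turn", "left", "side", "corner", "lane"},
    "entitlement": {"access", "legal", "human", "water", "vote"},
}


def guess_sense(sentence: str) -> str:
    """Return the sense whose cue words overlap most with the sentence."""
    cleaned = sentence.lower().replace(".", "").replace(",", "")
    words = set(cleaned.split())
    # Score each sense by how many of its cue words appear in the sentence.
    scores = {sense: len(words & cues) for sense, cues in SENSE_CUES.items()}
    return max(scores, key=scores.get)


print(guess_sense("Turn right at the next corner"))
print(guess_sense("Everyone has the right to access clean water"))
```

The same word gets a different label depending on the words around it. That is the basic idea, although a real model like BERT picks up these patterns on its own instead of relying on a fixed word list.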

Why Is It Important?

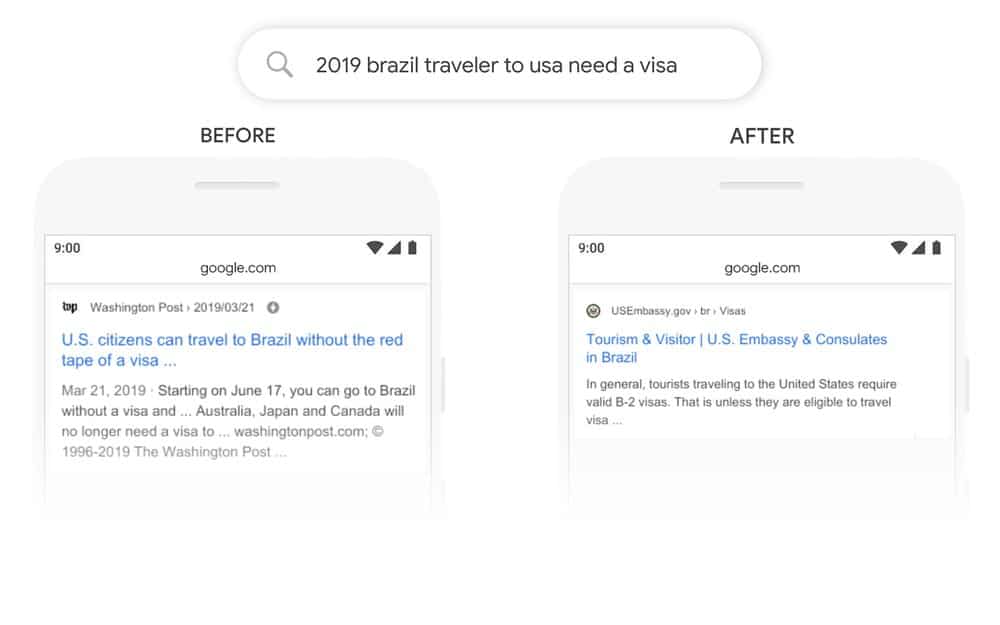

This is a major Google algorithm update, which will affect approximately 10% of all search queries going forward. While 10% doesn’t seem like a whole lot, there are billions of searches a day through Google alone, which makes that 10% far more significant. This update will allow Google to return more relevant results for longer and more complicated queries such as the ones below:

So, What Does BERT Mean For SEO?

Going forward, it means that Google will serve more accurate and relevant results for more complicated and question-orientated queries.

This means that if your content is poorly written, you’ll really struggle to rank against sites with well-written, informative content.

BERT will more than likely evolve in the coming years to influence a higher percentage of search queries.

We would suggest going over your content and making sure it’s structured well and is relevant to your services and customers.

Are you struggling to get your site to rank on Google? If you’re looking for help, our experts at Square Media are happy to assist you. Whether you just need some advice, or if you need someone to take over your Search Engine Optimisation, we’re more than happy to help! Get in touch with us today!